The DfE should reject the FELTAG recommendations in order to ensure that all the same mistakes are not repeated by ETAG

At the same time as the Further Education Learning Technology Action Group (FELTAG) got ready to submit its recommendations to government for action to support ed-tech in Further Education, a new group was set up to propose similar recommendations that would cover all education sectors. But the Education Technology Action Group (ETAG) has inherited all of the same flawed assumptions that were made by FELTAG and by BECTA before them. If Matt Hancock wants to be the man who ends the long history of failed government initiatives and the man who helps introduce genuine, transformative education technology to the UK, he needs to insist that the government is given a much clearer and more convincing rationale for action than the FELTAG report has offered.

Background

When the UK coalition government came into office in May 2010, one of the first things that it did was to light its bonfire of the quangos (quasi-autonomous non-governmental organisations, which in most cases mean government agencies). One of these was the British Educational Communications and Technology Agency, BECTA, and when BECTA went, so did the government’s active involvement in promoting technology in education.

The government’s position in this respect (and it is one that I largely agreed with) was that most of what Becta did was wasteful and ineffective and that if there was to be a future for education technology, then we were much more likely to get there quickly if the market was allowed to lead. The trouble with this policy has always been that there is not much money in education to tempt the red-blooded entrepreneur, particularly as government remains the ultimate customer and cannot help interfering (as it continues to do through its misjudged procurement frameworks). So the predictable response to the government’s withdrawal from ed-tech has been a deafening silence, broken only by the distant sounds of government initiatives in America repeating most of Becta’s mistakes, 10 years later.

Whatever you think of Michael Gove, no-one can accuse him of not being prepared to own up to mistakes. In a world in which most politicians angrily denounce each other across the chamber and tie themselves in oratorical knots rather than admit any culpability for anything at all, Michael Gove quite regularly turns up at the dispatch box in the House of Commons as a Roman Catholic might enter the confessional box. So it was that the DfE admitted that it had over-estimated the market’s ability to innovate by itself, and asked for advice on what it could do to amend matters. Matt Hancock, Junior Minister at the Department for Business, Innovation and Skills (also responsible for Higher Education) was given the brief, with responsibility both to David Willetts, Secretary of State at BIS, and Michael Gove at the DfE.

The first stage of the project was to form the Further Education Learning Technology Action Group (FELTAG) in January 2013. In April 2014, this produced its final report, to which the Department for Education is expected to respond this week.

In the meantime, on 23 January 2014, Matt Hancock made an admirable speech at BETT, arguing that we should welcome the new sorts of education technology that would support learning and at the same time maintain rigour. In this speech, he announced the establishment of a new Education Technology Action Group (ETAG), which was to repeat the exercise undertaken by FELTAG with respect to all sectors of education and not just to FE.

In their own report, this decision was interpreted by the authors of the FELTAG report as an endorsement of their own success:

The success of FELTAG led to the formation of a cross-departmental, ministerial learning technology implementation team (the Education Technology Action Group, ETAG)…[which] will explore the potential to replicate FELTAG’s success across the education system

It might be thought a little gonflé and certainly premature of the FELTAG authors to congratulate themselves on the success of their report even before they had finished writing it, and I hope that they will be proved mistaken in their optimism.

It is certainly true that the ETAG group has carried on from where FELTAG left off. Not only did it inherit a number of FELTAG participants (8 out of the 17 members of ETAG were previously participants in FELTAG), but more crucially, it also inherited many of its assumptions, which, as I shall argue below, are poorly founded.

Its new chairman, Professor Stephen Heppell, decided to proceed on the basis of a “crowd-sourced” consultation using Twitter. This started on 23 April and is due to last until 23 June.

This post will argue that:

- FELTAG produced a weak report which ought on its own merits to be rejected by the DfE;

- the wholesale transfer of assumptions from FELTAG to ETAG has meant that the members of ETAG are failing to address their own remit;

- ETAG’s Twitter-based consultation exercise was misconceived and has already proved to be a failure.

I will finish by proposing how I think that ETAG should move forwards in a way which will fulfill its original remit and help underpin a useful new approach to education technology.

The key features of the flawed consensus

The fundamental problems, both with FELTAG and ETAG and with most of the other ed-tech reports of a similar kind, are as follows.

- They fail to address explicitly the fact that, in spite of considerable investment over the past two decades, there is no empirical evidence that ed-tech has had any significant impact on education. This does not mean that we should not be very interested in the potential of ed-tech; but it does mean that we should be very cautious about asserting how it will work. Nothing can be taken for granted; everything needs to be scrutinized carefully and contrary opinion considered carefully. Yet all of these reports assert positions based on the flimsiest of rationales and consistently fail to engage with contrary positions.

- They regard technology indiscriminately as a sort of commodity, like a huge vat of peanut butter, which contains various sorts of hardware (particularly smartphones and tablets), sprinkled with some generic software and cloud services, like Twitter or Facebook. They do not recognize that technology is at heart all about process and not about things; they do not recognize that there are different technologies appropriate to different requirements and professions; or that these different requirements correspond to different, vertical markets for sector-specific technology products; or that in the case of education, the market for sector-specific technology barely exists.

- They frequently confuse two completely separate requirements: one being about what we should teach students about technology; and the other being to use technology to improve education across the curriculum, for teaching whatever curriculum society, parents and learners deem appropriate. So ed-tech is often justified in terms of promoting so-called “twenty-first century skills” or “digital literacy”, which are matters for the curriculum and not for pedagogy.

- They assume that the only people who can create innovative new approaches to education are teachers and their advisers and trainers. For this reason, the sort of people who would be able to build new markets for education-specific technology (software developers, statisticians, entrepreneurs) are not included in their deliberations.

- They assume that learning is an intrinsic good. In fact, left to themselves, people are only too quick to learn false information or behaviours which may be violent, addictive, self-indulgent or just lazy. Education is not just about learning, it is about learning the right things and about not learning the wrong things. This requires direction. The word itself comes from the Latin, educare, which means “to lead”. The relevance of this fallacy is that education technology is often advocated on the grounds that it encourages informal learning, e.g. through social networks, which rests on the assumption that all learning is good.

The FELTAG report

FELTAG’s unexamined assumption

The first problem with the FELTAG report is its definition of learning technology, which is taken from the Association for Learning Technology. This runs as follows.

Learning technology is the broad range of communication, information and related technologies that can be used to support learning, teaching, and assessment.

The trouble with this definition is that any technology can be used to support learning, teaching and assessment. If I wanted to learn about missile guidance systems, then missile guidance systems would doubtless be a very useful sort of learning technology under this definition. Right from the start, this means that the report makes no distinction between different types of technology. It admits of no term to represent the technology that is developed specifically to meet the education requirement, which in the main is what we lack. And not having a word for it, the reader who has not thought about these matters carefully is liable not to notice its absence.

Since all technology is classified as learning technology and almost none of this technology was designed specifically for learning, a heavy onus is placed on teachers to exploit the technological resources that they can inherit (or in the words of Diana Laurillard, “appropriate”) from other horizontal markets.

In this way, the report settles on its conclusion (that the problem lies with teacher training and not with technical development) in its very first sentence and without considering any possibility of a counter-argument.

The executive summary

This unexamined assumption is consequently expounded through the rest of the Executive Summary in the form of six points.

The sector has to keep abreast of change

The report concludes that it is for “policy-makers, teachers, governors, and managers” to understand the increasingly rapid changes in IT and to exploit these opportunities for the benefit of education. This is clearly unrealistic, when very few “policy-makers, teachers, governors, and managers” have any experience, expertise or often interest in IT.

Procurement must be appropriate and agile.

You might expect that this section would be the one to prove my argument wrong, the section in which FELTAG emphasizes the importance of procurement and shows that it understands the need to buy in the fruits of external expertise, as applied to the task of creating education-specific technologies. If only. Unfortunately the paragraph is all about “investment in technological infrastructure”—hardware, networking and connectivity. It does not mention the procurement of ed-tech.

Regulation and funding must not inhibit innovation and its effectiveness in improving learners’ outcomes

FELTAG believes that innovation will be led by teachers and lecturers, even though there is no evidence whatsoever that repeated attempts to do this in the past have had any beneficial effect at all. By bracketing “innovation” and “effectiveness” in the same phrase, it fails to recognize that even properly resourced innovation is extremely risky and fails more often than not.

The entire workforce has to be brought up to speed to fully understand the potential of learning technology.

There is no evidence for the potential for learning technology and, without evidence, training is merely a euphemism for indoctrination. This is supported by the fact that large amounts were spent on a wide range of training programmes under the last government, with no evidence whatsoever of positive impact. It ignores the fact that previous experience has shown that training teachers to use generic forms of technology has proven to be ineffective, or that by analogy with other forms of technical advance (such as the introduction of mobile technology, for example) it is almost never seen that these require the widespread training of consumers. It is well-designed technology that disrupts, technology that works “out of the box”, not cumbersome training programmes.

Relationships between the FE community and employers should become closer and richer, and enhanced by learning technology inside and outside the workplace.

However desirable this objective might be, it displays the same old confusion between the need to train students how to use the technology that they are likely to find in the workplace (i.e. the subject of their courses), and the need to use technology to improve learning. Where training occurs in the workplace, then of course education technology will be needed that supports the close working relationship between college and workplace—but this comes back to the procurement of suitable technology and not to the ad hoc development of better relationships.

Learners must be empowered to fully exploit their own understanding of, and familiarity with digital technology for their own learning.

If we don’t need new sorts of education-specific technology, and if the teachers cannot exploit generic technologies to produce positive outcomes in the classroom, then maybe learners can do it for themselves? But there is no evidence to suggest that Twitter and Facebook have ever made a significant contribution to supporting the objectives of formal learning, beyond the occasional, informal use for which they are already available.

FELTAG’s absence of evidence

The report then proceeds to paint an extremely optimistic picture of the progress being made by teachers.

From the teacher survey, we know that they are doing the innovation, and there are many examples in the sector of highly effective and innovative use of digital learning technology.

Neither of the two surveys on which this account is based is published. There is no survey data, no information about the samples or how they were selected, no use cases and no evidence of positive outcomes. It is an entirely evidence-free zone.

This section concludes that:

the key to adapting to disruptive innovation…is not technology. It is to put people ahead of strategy.

I am not sure what it means “to put people ahead of strategy”, other than that a properly justified strategy is not required. Maybe that explains FELTAG’s fuzzy reasoning and why the assertion that technology is not important is not supported by any evidence at all, but was assumed to be true from the very start of the report.

I am very happy to believe that this conclusion reflects the opinions of teachers, who see themselves as the gatekeepers of education, who tend to see teaching as a matter of personality and relationship rather than process, and who generally have little or no understanding of technology. But it is a conclusion that is contradicted by numerous examples from history, which consistently show that it is the technology that explains lasting change and very rarely the people.

FELTAG’s recommendations

The final part of the report puts forward a long list of 39 recommendations, such as to:

- encourage the development of programmes to professionalize FE governors, principals’, managers’ and teachers’ use of learning technology, building on the best current models;

- raise profile of appropriate and relevant accreditation schemes, such as ALT’s CMALT, which accredits the learning technology capability of staff, and the RAPTA self-assessment tool, which assesses how effectively an organisation uses learning technology;

- colleges to simultaneously run a national annual ‘Learning Tech Development Day’, centrally-coordinated by Jisc’s RSCs with streamed Ministerial input;

- identify public sector online learning content that could be useful for functional skills and make this available under an OGC licence;

- mandate the inclusion in every publicly-funded learning programme from 2015/16 of a 10% wholly-online component, with incentives to increase this to 50% by 2017/2018. This should apply to all programmes unless a good case is made for why this is not appropriate to a particular programme.

In short, FELTAG recommends a return to the days when a bonanza of central government spending ended up in the pockets of consultants and trainers (just like the members of FELTAG), without delivering any measurable benefit to learners.

The transfer of assumptions from FELTAG to ETAG

While FELTAG assumed that the establishment of ETAG represented an endorsement of their own success, the opposite interpretation seems to me to be rather more plausible: that seeing the FELTAG car-crash coming down the road, Ministers set up ETAG in order to allow them to reject FELTAG without ruffling too many (fairly easily ruffled) feathers. “Thank you”, they will say, “for those very interesting recommendations—now let us see how ETAG recommends that we carry all those ideas forwards in practice”.

But if ETAG was meant to give the ed-tech community a second chance to say something sensible, then it is not working out very well, in spite of the fact that they were given a very helpful steer by ministers. The kick-off website, launched by Stephen Heppell in February 2014, explained the group’s remit as follows:

Our remit said “The Education Technology Action Group ETAG will aim to best support the agile evolution of the FE, HE and schools sectors in anticipation of disruptive technology for the benefit of learners, employers & the UK economy” …and we have also been asked to identify any barriers to the growth of innovative learning technology that have been put in place (inadvertently or otherwise) by the Governments, as well as thinking about ways that these barriers can be broken down.

On the same page, ETAG’s mission is illustrated with a graphic that had been originally produced by Bryan Mathers for FELTAG, which was copied into the ETAG base documentation, and which has been subsequently widely quoted in the Twitter conversation.

It is worth pointing out, in passing, that learning does not need to be fun—it needs to be effective—and these don’t necessarily go together, as Tom Bennett has recently argued.

The more important point is that, as a mission statement, this graphic imports from FELTAG the assumption that it is all about “enabling innovation with [generic] (‘I am a smart device’) technology” and not through the production of new education-specific technology; and that this innovation with technology will occur by empowering teachers and learners, not software developers and entrepreneurs. There is no mention at all of industry, which in one shape or another is normally what creates innovative technology, either in response to or in anticipation of user demand. This is despite the fact that ETAG’s remit is perfectly clear that its job is to recommend action “in anticipation of disruptive technology” and “to identify any barriers to the growth of innovative learning technology”.

ETAG was asked to consider how to encourage the emergence and deployment of new forms of innovative education technology by expert developers; but instead they have chosen to look at the opportunities for pedagogical innovation in the classroom by teachers, using the same old generic technology. Where the remit talks about “disruptive technology”, ETAG talks about “disruptive innovation”. They have misunderstood what they are supposed to be doing.

The Twitter-based conversation has failed

When ETAG was established, Stephen Heppell announced a “crowd-sourced”, Twitter-based consultation exercise, in which everyone would be invited to contribute. It was an innovative idea and given (as I suggest above) that innovation fails 9 times out of 10, no-one should be surprised that it has not worked.

Part of the problem was that it was mis-sold. A repeated theme in the many calls for participation was that teachers should contribute their experiences of “what works”.

Only a very few people responded to this call, sending in short snippets about what they or their learners were doing.

Commendable as these teacher-driven initiatives doubtless are, there is no means of establishing whether these examples are having any positive impact on learning or whether they could be successfully replicated at scale to other learning providers. Nor is it within the remit of ETAG to establish what works and what does not: their job is to advise on removing barriers to the uptake of the disruptive technology which is not going to be created by teachers, but by people like Sir Michael Barber of Pearson, who retweeted the following by Bruce Nightingale:

Because the true “disruptive technology” that ETAG was tasked with considering lies in the future, not the past, because this is something that even Pearson only “envisages”, there is no empirical evidence to collect. When considering what to do next, it is not so much evidence that is required, as a priori reason and rigorous discussion. To this task, the medium of Twitter has (predictably enough) proved to be wholly inadequate.

With some technical help from Martin Hawksey (@mhawkesy), who has developed the pretty TAGSExplorer tool (screenshot above), I downloaded all #etag tweets from the opening of the consultation on 23 April through to the end of May. There were a total of 676 (excluding tweets about electronic luggage tagging systems in Africa, gospel churches in America, and the Edinburgh Tourist Advisory Group).

I allocated all of these tweets to one of the following categories:

| Category | Description |
| --- | --- |
| Repeat | Retweets and tweets repeated by the same sender |
| Social | “Looking forward to seeing you”, “Thank you for your comment” etc. |
| Announcement | Announcing ETAG and encouraging participation |
| Significance | Discussing the significance of ETAG, for example to say that it is well regarded, without directly encouraging participation. |
| Quote | Repeating material from ETAG base documents or the assumptions made by the group |
| Promote | Promote a third-party website, person or event (e.g. by link) without making an obvious point relevant to ETAG. |
| Example | Providing an example of the use of ed-tech |
| Third party | Link to an external site, video, or document that implies a substantive and relevant point, even though it might take some time to work it out. |
| Pro Ed-tech | Making the general point that ed-tech and ICT is a good thing |
| Comment | Commenting on the ETAG assumptions or process (either negatively or positively). |
| Policy | Making a point relevant to the remit of the group (including by reference to an external site that is authored by the tweeter, but not to someone else’s site) |
| Discuss | Making a point relevant to the remit of the group in direct response to another tweet. |

I then grouped these categories into four super-categories, as follows:

| Super-category | Categories included |
| --- | --- |
| Non-substantive | Repeat, Social, Announcement, Significance, Quote. |
| Tangential | Example, third-party, pro-ed-tech |
| Process | Comment |
| On-remit | Policy, discuss |
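The roll-up from categories to super-categories can be sketched in a few lines of Python. This is only an illustrative sketch, assuming each tweet has already been hand-labelled with one of the categories above: the mapping follows the two tables, but the function name `summarise` and the sample labels are hypothetical and are not taken from the spreadsheet. “Promote” is not assigned to a super-category in the table above, so the sketch reports it as “Unassigned” rather than guessing.

```python
from collections import Counter

# Mapping taken from the super-category table above.
# "Promote" is not assigned there, so it falls through to "Unassigned".
SUPER_CATEGORY = {
    "Repeat": "Non-substantive",
    "Social": "Non-substantive",
    "Announcement": "Non-substantive",
    "Significance": "Non-substantive",
    "Quote": "Non-substantive",
    "Example": "Tangential",
    "Third party": "Tangential",
    "Pro Ed-tech": "Tangential",
    "Comment": "Process",
    "Policy": "On-remit",
    "Discuss": "On-remit",
}


def summarise(labels):
    """Return {super_category: (count, share_of_total)} for a list of labels."""
    counts = Counter(SUPER_CATEGORY.get(label, "Unassigned") for label in labels)
    total = len(labels)
    return {name: (n, n / total) for name, n in counts.items()}


# Hypothetical labels for illustration only; not the real #etag data.
sample = [
    "Repeat", "Repeat", "Announcement", "Example",
    "Comment", "Policy", "Promote", "Social",
]
summary = summarise(sample)
for name, (n, share) in sorted(summary.items()):
    print(f"{name}: {n} tweets ({share:.0%})")
```

Keeping the mapping in a single dictionary makes the judgement calls explicit: anyone who disagrees with a category's status can change one line and re-run the counts.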

I have already explained why I regard examples of the use of ed-tech as “tangential”. I hope it is also fairly clear why “pro-ed-tech” should be given this fairly lowly status. Some people may object that I have been hard on “third-party references” – but my rationale here is that it is very difficult for any discussion to absorb such references and evaluate them. For that, the referrer must state what they see as the significance of the reference – and where this happens, the tweet gets tagged with the higher status of “policy”. In some cases, contributors would make “policy” tweets and support them with other “third-party” references, which is fair enough.

Once I had marked up all the individual tweets, the following data could be extracted fairly easily. And I think it is useful because it gives a fairly good idea of what was really going on in the consultation. The full data on which these graphs are based is available in the “ETAG tweets 31 May” spreadsheet.

About three quarters of the tweets were “non-substantive” (mostly repeat tweets and announcements), one twelfth were “tangential”, one twelfth concerned “ETAG process” and one twelfth (only 59 tweets) were “on-remit”.

The graph for contributors showed a sharply narrowing pyramid, with only 23 contributors sending 4 or more tweets and only 6 contributors sending 10 or more tweets.

This low level of participation becomes all the more stark when you look at people making any sort of “substantive” tweet. There were only 7 contributors who sent 4 or more substantive tweets (including tangential and process tweets but excluding retweets and announcements – we are not even talking just “on-remit” here) and only 2 contributors who sent 10 or more substantive tweets.
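The contributor figures are threshold counts over the number of tweets per sender. A minimal sketch, assuming each tweet is reduced to a (sender, category) pair: the handles, the helper name `contributors_at_or_above` and the sample rows are hypothetical, and “substantive” is taken here to mean any category in the Tangential, Process or On-remit super-categories, which is one reading of the description above rather than a definition from the spreadsheet.

```python
from collections import Counter

# Assumption: "substantive" means any category in the Tangential, Process
# or On-remit super-categories.
SUBSTANTIVE = {"Example", "Third party", "Pro Ed-tech", "Comment", "Policy", "Discuss"}


def contributors_at_or_above(tweets, threshold, substantive_only=False):
    """Count senders with at least `threshold` tweets.

    `tweets` is an iterable of (sender, category) pairs.
    """
    per_sender = Counter(
        sender
        for sender, category in tweets
        if not substantive_only or category in SUBSTANTIVE
    )
    return sum(1 for n in per_sender.values() if n >= threshold)


# Hypothetical rows for illustration only; not the real #etag data.
sample = (
    [("@alice", "Repeat")] * 6
    + [("@alice", "Policy")] * 4
    + [("@bob", "Announcement")] * 5
    + [("@carol", "Example")] * 2
)

print(contributors_at_or_above(sample, 4))
print(contributors_at_or_above(sample, 10))
print(contributors_at_or_above(sample, 4, substantive_only=True))
```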

It should be noted that I only analysed the responses made on the main #etag hashtag and did not include tweets sent in to the individual discussion strands (#etag1a, #etag1b, #etag1c, #etag2a, #etag2b, #etag3a, #etag3b, #etag4), purely because of the amount of extra work this would involve and the relatively small number of tweets sent in (a total of only 2 to the “wildcard” thread). I should mention, though, that Oliver Quinlan made a significant number of on-remit, thread-specific tweets, which, had I included these threads, would certainly have ensured he was included amongst the substantive contributors.

I have not included here any analysis of the different substantive points being made. I may try to do that at the end of the consultation, but in the meantime, Martin Hamilton has posted a useful interim round-up of key points and key references.

This low level of participation (as will already be clear from the absolute numbers we are talking about) applied to the great majority of members of the ETAG committee as well. Bob Harrison obviously took the main responsibility for drumming up interest, making large numbers of announcements, mostly repeat tweets sent on automated sender software, as well as a few tangential tweets that referred to third-party papers. One or two others of the committee did their bit but no-one contributed to any meaningful “on-remit” discussion.

This may have been a point of deliberate policy and I am sure it would have been a good one had the job of ETAG been to assess the empirical evidence that respondents had submitted. But, as argued above, that was never the nature of ETAG’s job. What was required if the Twitter discussion was ever to have worked was good moderators and chairmen to lead and ignite the discussion, ensuring that people who came on the list to assert one position responded to the objections of those who took up a contrary position.

This was never going to happen, not only because ETAG misunderstood its remit, but also because it was always apparent that the leading members of ETAG had already made up their mind. Exposing their assumptions to the scrutiny involved in an open debate seemed at least unnecessary, given that they seem to regard their views as beyond question.

This attitude is clear in the article announcing the formation of ETAG on Merlin John’s website, in which it is stated that such is the degree of distinction of the people represented on the ETAG committee that:

the question will be whether the politicians and their civil servants can keep up.

It is also implied by the base paper, published on 23 April and already criticized on this blog. Having outlined its vision of the future, the base document introduces a “wild card” section by saying:

Most of the above is without surprise – there is very broad agreement that those are indeed directions in which we are heading. Indeed for most of them it is not hard to find existing examples of institutions that are already there, or largely there. However, our brief was to be bold and we don’t want to exclude the braver ideas. So for this section we would welcome braver thoughts and suggestions from all of you, to sit alongside our own thoughts.

The mindset is clear: don’t bother questioning the substance of our report, on which we are already decided, but we may agree to include some of your “braver” ideas in an annex.

In particular, the attitude is apparent in the posture of Bob Harrison, with whom I have frequently crossed swords on this very issue: he is not willing to engage in any substantive discussion with anyone with whom he disagrees. Bob is no shrinking violet and prides himself on being a provocative and outspoken critic of government. He dismissed the authors of the Royal Society’s extremely thorough Shut down or restart? report as spokesmen for an industry-driven cabal. The Education Funding Agency refused to share a platform with him, apparently on account of his outspoken criticism of the decision to close down the Building Schools for the Future programme. And this was also the reason, Bob claims, that an expert advisory group within the DfE that he chaired was closed down. But he is not at all happy when people criticise him. When I pointed out that claims that he had made in Computing Magazine about the OFSTED report, ICT in schools, 2008–2011, were untrue, he first refused either to correct or to defend his statement and then, when I persisted in arguing my case, threatened me with legal action. When I criticised his arguments in the context first of the FELTAG and now the ETAG consultations, he justified to a mutual colleague the fact that much of the real discussion is now going on behind closed doors by the need “to avoid trolls, spammers, abusers and self-publicists”. I will leave you, dear reader, to decide which of these categories applies to me, though it seems to me that Bob Harrison confuses personal attack with the sort of legitimate criticism that is essential if proper, rational debate is to occur.

While I am sure that Bob Harrison has all sorts of interesting views that should be taken into account by any policy group, and while I have no problem at all with debating these matters with him, the fact that he is so convinced of his own opinion and so uncomfortable with the to-and-fro of robust and open debate means that he is surely a most unsuitable person to be moderating an important government consultation.

For the moment, at least, it appears that the substance of the ETAG discussion has been moved behind closed doors, where it is occurring between self-selected groups of teachers who already agree with the assumptions made by the ETAG committee.

Reprise: the core argument

Putting all this stuff about process and openness and remits aside (all of which you might regard as peripheral), let me summarise again the essence of the point that I would choose to debate, if Bob Harrison and the rest of the ETAG group consented to debate with me. It would be that the technology matters.

I would certainly expect them to provide a substantive response to the long article I have written on this subject at “Its the technology, stupid!”. But, for all the detailed argument contained in that article, the basic outline of the debate can be summarised more pithily in the following short Twitter exchange. First, Nick Dennis summarises the position taken by ETAG, and is rebutted by Tom Bennett.

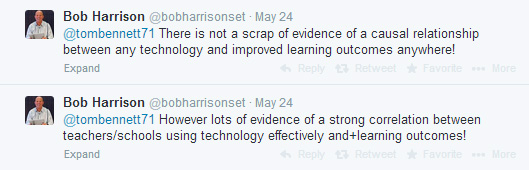

Then the position being asserted by Nick Dennis (and most of ETAG) was defended by Bob Harrison as follows.

The minor problems with Bob’s arguments here are that (1) the exclamation marks confirm the impression already discussed that there is no point in arguing because his mind is made up, and (2) there is a sleight of hand between the use of “causal relationship” in the first tweet and “correlation” in the second; for a discussion of how these things relate to one another, see my “Private intuition: public expertise”.

But the main problem with Bob’s argument is that the distinction between the tool and the use of the tool is a bogus one. No one suggests (as the American NRA publicity material always claims) that guns go round killing people—they just claim that guns make it easier to kill people. No tool ever makes a difference unless there is someone there to use it.

Nor is it any surprise to find that there is a correlation between good teachers and good learning outcomes—we knew that already. The problem is what you are supposed to do when faced with a chronic shortage of good teachers, especially in shortage subjects.

The question as to correlations is whether (using the sort of generic technology currently available) good teachers achieve better learning outcomes using this sort of technology than when they use other approaches to teaching. The answer is that they do not. The Education Endowment Foundation report, The Impact of Digital Technology on Learning, concludes (p2) that:

“Research findings from experimental and quasi-experimental designs – which have been combined in meta-analyses – indicate that technology-based interventions tend to produce just slightly lower levels of improvement when compared with other researched interventions and approaches.”

And the reason why, in Bob Harrison’s words,

“there is not a scrap of evidence of a causal relationship between any technology and improved learning outcomes anywhere!”

is not that the technology doesn’t matter but that it doesn’t exist, at least not the education-specific technology that will make the difference.

Until this argument is properly addressed, neither the FELTAG nor the future ETAG reports will have any integrity whatsoever. You cannot deal with criticism just by pretending to be deaf.

An alternative approach to ETAG

I have already published my proposals for what I think that ETAG should say, but those are just my opinions, and any serious recommendations to government require not only useful inputs but also the right process.

So I shall finish this article by outlining how I would run what could have been—what just conceivably might still be—a really great opportunity to hammer out an effective new approach to ed-tech, that would bring genuine transformation to our education.

I would set the following five questions, which address the ETAG remit and do not encode any preconceptions as to what the answers should finally be.

- What are the key problems in education that technology can help to solve?

- How will technology be used to solve these problems?

- To what extent have these solutions already been demonstrated to work?

- What and how significant are the main barriers to deploying these solutions?

- What policies should government adopt to overcome these barriers?

I would break the consultation into twelve weeks, address each question by a four-stage process, staggering the introduction of each new question by one week and leaving one week for thinking time between each stage. The stages would be:

- Invite white papers, each with a word limit of 1,000 words (accompanied by tweet-able key points), to answer the current question, with all white papers being published on-line immediately they are submitted.

- Invite each participant to submit a critique: a single 1,000 word paper addressing as many of the published white papers as they liked (again, with tweet-able key points).

- Invite the original authors of the white papers each to submit a response which acknowledged criticism and clarified or defended their papers against the critiques that had been directed at them.

- Publish a summary of the main white papers, critiques and responses, highlighting the main differences of opinion and the different options for action that had been proposed.

Someone who participated fully in the process might end up writing 5 white papers, 5 critiques, and 5 responses, which might come in at anything up to 15,000 words.

This would result in a schedule along the lines of the following table, with the five questions along the top axis and the 12 weeks down the left hand axis.

| | Q1 | Q2 | Q3 | Q4 | Q5 |
|---|---|---|---|---|---|
| W1 | Invite white papers | | | | |
| W2 | | Invite white papers | | | |
| W3 | Invite critiques | | Invite white papers | | |
| W4 | | Invite critiques | | Invite white papers | |
| W5 | Invite responses | | Invite critiques | | Invite white papers |
| W6 | | Invite responses | | Invite critiques | |
| W7 | Publish moderator’s summary | | Invite responses | | Invite critiques |
| W8 | | Publish moderator’s summary | | Invite responses | |
| W9 | | | Publish moderator’s summary | | Invite responses |
| W10 | | | | Publish moderator’s summary | |
| W11 | | | | | Publish moderator’s summary |
| W12 | Publish conference prospectus | | | | |
| ? | Hold conference | | | | |
| ? | Write up policy recommendations | | | | |
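The staggering rule behind this timetable is simple enough to express in a few lines. The following is only a rough sketch, in Python, of how the weeks fall out if each question starts one week after the previous one and each stage follows two weeks after the last (one week of work, one week of thinking time). The function names `build_schedule` and `word_budget` are my own illustrations, and the 1,000-word limit on responses is an assumption: the proposal above only states that limit for white papers and critiques.

```python
# Hypothetical sketch of the staggered consultation timetable.
# All names here are illustrative; nothing below is an official ETAG process.

STAGES = [
    "Invite white papers",
    "Invite critiques",
    "Invite responses",
    "Publish moderator's summary",
]


def build_schedule(n_questions=5, stage_gap=2):
    """Return a mapping of week number -> {question number: stage name}.

    Question q opens in week q (a one-week stagger), and each later stage
    starts stage_gap weeks after the previous one.
    """
    schedule = {}
    for q in range(1, n_questions + 1):
        for s, stage in enumerate(STAGES):
            week = q + s * stage_gap
            schedule.setdefault(week, {})[q] = stage
    return schedule


def word_budget(n_questions=5, papers_per_question=3, word_limit=1000):
    """Upper bound on words written by one fully engaged participant.

    Assumes (my assumption) that responses share the 1,000-word limit
    stated for white papers and critiques.
    """
    return n_questions * papers_per_question * word_limit


if __name__ == "__main__":
    schedule = build_schedule()
    for week in sorted(schedule):
        cells = ", ".join(
            f"Q{q}: {stage}" for q, stage in sorted(schedule[week].items())
        )
        print(f"W{week}: {cells}")
    print("Maximum words per participant:", word_budget())
```

Under these assumptions the last moderator's summary lands in week 11, which leaves week 12 free for the conference prospectus. Changing `stage_gap` or `n_questions` shows quickly how a shorter or longer consultation would stretch the timetable.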

At the end of the consultation period, all of the moderators’ summaries would be combined into a single prospectus for a conference that would be based around a series of adversarial debates and consensus-building seminars. Given some funding, expenses for attendance could be offered to people who had made substantive contributions during the preliminary phase.

Some appointed participants would be primed to take part, but the process would be open to all-comers. The schedule is deliberately gruelling and this would impose a degree of self-selection. The resulting consultation would be neither a superficial vox pop nor a reflection of the views of any special, closed interest group, but rather a demanding and stimulating debate that I believe would attract experts and stakeholders from around the world.

I would propose that none of these expert-advocates should be responsible for writing up the final policy recommendations, which would more appropriately be done by the sort of people that you would find in a political think-tank. Their job would be to reflect the consensus that emerged from the debate, knocking the results into a politically acceptable shape, avoiding the charge, real or imagined, that they were being swayed by private enthusiasms.

Having watched over twenty years one disastrous, misconceived ed-tech initiative follow another, I do not expect that the world will suddenly become sane. But one still cannot help imagining what it might look like if such a thing were to happen.

I look forward to your comments below.

You make a very good case. The sources of innovation in technology in many spheres are well addressed in academic work. Dorothy Leonard’s The Wellsprings of Knowledge is a particularly good example. There are many others who demonstrate that innovation doesn’t just come from the producer of technology. Eric von Hippel has shown that users in the surgical instrument business provided many of the ideas that led to innovation. Such thinking is behind Lego’s remarkably fruitful engagement with its customers, which has created so many product innovations. I agree that educational technology producers need to be part of the discussion, but they won’t have all the answers.

Hi Alex, thanks very much for your comment – and I agree with you. Once we put the megaphones aside and start to get down to the detail, we will find that the relationship is a very complex one – and certainly one in which teachers will play a very important role, both as consumers (which in an efficient market, are the ones that drive innovation) and also as the people initiating innovation. And I think that there are all sorts of shades along the continuum regarding how this might happen, from early adopters & champions, through to managers or advisers to start-ups and other sorts of consultants.

Steve Walker (@steve_walker) challenged me recently on Twitter as to whether I was advocating a take-over by media and tech companies – and although I think these people have some expertise that is relevant, my answer is no, because the key aspect of the ed-tech industry is pedagogy – the particular sorts of interaction and sequencing that are relevant to the classroom.

The problems, though, that I think teachers on their own have are (a) sometimes in systematising their intuitive knowledge, and (b) in replicating their expertise. I am not doubting that lots of really important, innovative things go on in all sorts of classrooms – but teachers do not have the expertise to create a replicable product / practice (I see them as being closely related).

For example, when I started teaching History at William Ellis, a boys’ school in North London, in 1991, I got them to do their GCSE History as a HyperCard multimedia presentation. They had to argue for or against the proposal that the British Generals on the Somme were donkeys. The academic merit of the medium was that it forced them to cut out the waffle and focus on making an argument, with each slide containing a pithy combination of analysis and evidence. I think it worked well and encouraged all sorts of interesting questions, like “this piece of evidence doesn’t fit my case – do I have to include it?” But when I left the school, no-one repeated the exercise because you needed to be an IT enthusiast to run it properly.

If you create the right market infrastructures to encourage innovation, I would be very surprised if you did not find a lot of those ideas bubbling up from current or ex-teachers.

I will try and find Dorothy Leonard – and thanks again.

Crispin.

I agree with many of the points about the manner in which the FELTAG report was ‘curated’, and the way almost all involved have ‘self-selected’.

However, I feel that there are 2 main reasons why we have so little evidence of large scale impact from educational technology used in FE, and they are both political.

1) Internally, colleges seem loath to be honest with staff and state that funding will continue to fall and that teachers will either have to work harder, or find new ways of managing learning to improve efficiency.

2) Externally, the diverse regulatory bodies give no clear outline as to what is allowed in order to improve efficiency.

There are countless examples of FE providers carrying out small projects that have a positive impact on learners on the JISC Delicious feed, but the reasons these have failed to grow are given above. Fundamentally the DfE has provided little freedom for colleges to innovate.

My simplistic answer to it all would be to say to colleges and the profession, “We don’t know how it will work, but we believe/know it can work.” Funding should be fixed and transparent for a whole term of government and so should achievement targets. Then colleges could look at the landscape strategically and decide how they wanted to approach it. Then we really would find out what worked.

Thank you Charlie,

We agree that square one in this debate is to be honest and admit that (though there is plenty of reason to believe that this is worth doing) no-one has any evidence yet as to how it will work. Any recommendations must be completely agnostic about “what works” and I totally agree with the formula you use to express this.

My main point is we cannot go on making the assumption that the technology doesn’t matter – and this point applies to all sectors.

Your point about removing regulatory barriers is different, and as I do not have any experience of FE, I couldn’t really comment on the detailed aspects. But it strikes me as being spot on the remit and something that FELTAG ought to have really majored on.

I agree with the general point, as I think would Ministers, that the whole education system is too driven by regulation. We should have an education system that is driven more by outcomes and less by regulatory meddling. The FELTAG recommendations aim in precisely the opposite direction, recommending more government projects, mandating this sort of assessment and that sort of pedagogy. Null points, IMO.

I think we would both approve of a sort of outcome-driven ecosystem, that provides both transparency and autonomy to providers of education. But I think it has a problem, which is the difficulty in measuring outcomes/learning. Many people say that the exams are too narrow, value-add metrics contentious, targets distorting. So in schools, the government has relied on OFSTED but this has come under fire for assessing against unproven pedagogies and is really part of the regulatory apparatus. But as that is dismantled, we need an outcome-driven alternative way of measuring performance.

So the key to all this, IMO, both to enable integrated ed-tech software and to enable the sort of autonomous, innovative, outcome-driven ecosystem, is to have a reliable way of measuring learning. And that comes down to the “meaningful data”. Which is what Bruce Nightingale/Michael Barbour’s retweet is all about.

I think Bruce puts his finger on it.

And from the point of view of the politics of all this, I think that ed-tech is MUCH more important to Ministers than they realise. It is not just something that they need to help along at the margins – it is actually the key lever for change in achieving their primary political objectives.

Thanks for the comment. Crispin.

With FE one of the things that we will come across quite a lot is the ‘interface’ between the teacher, the student and the technology. One of the things that characterises a large proportion of FE students is a dissatisfaction/failure in traditional education, and they will need a great degree of support into the use of any eLearning.

One question I’m currently thinking about is whether Moodle is, or can be made, fit for purpose for FE. Can it be socialised enough to make it an engaging learning experience, and provide meaningful data on learning?

Another thing the sector needs to do is have a look at Tin Can now that the first open source LRSs are appearing. So I do agree that the technology itself will have a massive impact, and we certainly need to be moving away from some of the technologies and approaches that eLearning has been built on traditionally (SCORM and other such ‘content’ rich approaches).

I think the difficulty in learning to use the tech (which means extra strain on teaching time) is one reason why we need industry strength software that (in step with most of the current trends for the consumerisation of tech and the improvement of user interfaces) needs to be really easy to use.

I have mixed feelings about the social dimension. I agree it is very important but is always in danger of spinning off-track. In my view, the social networking aspect needs to be structured and directed to the task in hand, which is why generic platforms IMO will never work, be they consumer tools like Twitter or education-specific tools like Moodle.

To put it another way, in my slide at https://edtechnowdotnet.files.wordpress.com/2013/11/slide-220.jpg, there are two levels: the learning platform level and the learning activity level. Things like Moodle put social interaction at the platform level, when IMO they belong at the activity level. For two reasons: (1) because the *nature* of the interaction will change with different activities, and (2) because sometimes you want interaction and sometimes you don’t want it at all.

I am very interested in TinCan, and was involved in its pre-TinCan incarnation, which was the Run Time Web Services project in LETSI. But I think that it is in its infancy still and has not done very much beyond sorting the transport layer – how you move the data about. The real difficulty lies in “what data do you want to move about – how do you measure learning?” – and this is a whole new layer to the problem that will not have a one-size-fits-all solution. I don’t think you will get real innovation in software development without parallel innovation in data interoperability standards.

I agree with your comment on “content”. You might be interested in my presentation to ISO/IEC SC36 on “What is content?” at https://edtechnow.net/2012/04/03/what-do-we-mean-by-content/. In my view, we should completely change what we mean by “learning content” to refer to activity, not information. I remember when I first heard the term used, probably in about 1996, and thinking to myself – “you guys have completely missed the point”. Still have, IMO.

Hi Crispin.

There is plenty of evidence of the high impact of technology in education; it is the evidence that schools have used to justify to themselves their continued high investment in technology. This hasn’t been formalised because for years Becta and others looked for causal relationships between technology and better learning, but there aren’t any. Technology acts on learners, alongside all the other aspects of pedagogy brought to bear by the teachers. It is the higher engagement of the learners and their ability to do things not achievable without technology that causes the higher achievement. Vanessa Pittard, then Head of the DfE technology Policy Unit, clearly identified this when speaking to a Naace meeting in 2011 when she said “This is not about technology in its own right directly producing better outcomes but that of technology enabling better practice and this better practice impacting on outcomes; the question then is what practice using ICT produces most impact, particularly in relation to difficult to tackle educational issues.” – which chimes well with your suggested approach.

The biggest ‘difficult to tackle’ education issue is motivating children (and college students) to want to learn. When schools succeed in doing this they achieve startling results no matter what disadvantages pupils may have. And some schools are doing this all round the world by following their own instincts and not being beholden to government diktats that can get in the way. Schools leading the way in this often have more in common with schools hundreds or thousands of miles away than with schools down the road that are stuck in the past. For research on this published by Mal Lee and myself – A Taxonomy of School Evolutionary stages – see http://schoolevolutionarystages.net. There is a ‘virtuous spiral of improvement’ that we have identified in schools gaining the Naace 3rd Millennium Learning Award where the schools change pupils’ psychological approach to school and learning, developing far greater degrees of ownership of their learning and developing much more collaborative and supportive relationships between everyone in the school. Technology is the key enabler of all parts of this spiral. This level of impact from technology is a huge multiplier of all the ‘smaller’ impacts that are possible from specific uses of hardware and software, which themselves become more powerful once the thirst for learning is energised.

As to the role of industry, the whole process of innovation with technology in education is now being led by schools. Policy makers and educational researchers are now last in the line to see and to understand the innovations schools and pupils are making. So it is necessary for companies and schools to work very closely together. Generic technology created for other markets does not necessarily work to full advantage in education until it can be fitted into the educational environment of teachers’ pedagogy, teacher-pupil relationships and collaboration between learners, which often happens very widely, for example Mathletics. Apple discovered this with the iPad and Apple TV that have needed considerable further development to make them work properly in classrooms.

And a final word; if you or any other company are looking to assess the impact of technology on learning I suggest you should look at what it does to how the learners act rather than to test scores. Teachers are forever mentioning the ‘engagement’ that technology produces, but I have yet to find any research on the positive impact of technology on pupils’ desire to learn and on their learning activity – yet the schools know this. As Rod Dean, Head of St Peters’ Bratton says in the video at http://www.broadieassociates.co.uk/page65/page52/page55/files/%20Creating%20better%20learners.mp4, “You see it in the energy of the children”, and “They are doing more of what we would call learning in their own time”. And the other teachers in this video clearly tell you what the impact of their use of technology in the school is.

Roger

Hello Roger and thanks for your comment.

We have both been around in this game for a long time and I have heard these arguments many times before, from you and others – but I have to say that I disagree pretty fundamentally with almost all of them.

I am well aware of Vanessa Pittard’s position here, which I have heard myself on many occasions and which reflects the position taken by a number of researchers. But I am afraid to say that as far as I can understand it, it seems to me to be complete gobbledegook. I can’t imagine how you kept a straight face while you wrote that technology has impact but no causal relationships: impact and causal effect are exactly the same thing.

Causal effect is still causal effect whether it is direct or indirect. In fact, again, there is no difference because the real world is made up of continuums (or should that be continua?) and floating point numbers, not integers, objects and events. If I say that throwing a brick at a greenhouse causes the glass to break, I am ignoring all the intermediate factors, such as the trajectory that the brick follows through the air, gravity, air resistance, the molecular structure of the glass etc.

If, in the example you give, you say either that:

technology -> motivates students -> better learning;

or that

technology -> better teaching practice -> better learning;

then in both cases “better learning” is a consequence of “technology” and “technology” is therefore a cause of “better learning”. The existence of the intermediate stage is of no consequence (if that doesn’t confuse the issue).

In both cases, these are not facts but theories, and until there is quantitative evidence to support them, they are theories unsupported by evidence.

I agree that motivation is important and desirable but certainly not that it is sufficient. In fact, I don’t even agree that it is necessary. There is strong survey evidence which suggests that as a generalisation, children in Korea hate school, yet year after year they come at or near the top of the international comparison tables. That evidence (unless it is somehow factually incorrect) *is* sufficient to show that motivation is not the only, maybe not even the primary, consideration.

Unless, of course, you say that Korean children are “motivated” by being soundly thrashed if they don’t do their homework – but then we have to be clear whether by “motivation” we are talking about “doing the work” or “being happy”. You were, I think, and the people on your video are certainly talking about “being happy”.

In regard to being happy (energetic, enthusiastic etc), the anecdotal evidence from teachers is highly unreliable. Of course teachers like to have classes full of motivated children: they are much easier and more enjoyable to teach and one feels good about the fact everyone is enjoying themselves. But are they learning anything?

The video interview you reference is, I think, rather revealing here. I transcribe what I see as the key part from 1:56.

Are they significantly better learners coming out of primary schools than they used to be?

Yes, because they’re independent, they’re looking for themselves, they’re not spoon-fed, they’re not just relying on one person to give them the information and filling up their vessel: they’re filling up their own vessel, looking for themselves, and it’s a life-skill.

I make two points.

1. I agree that being an independent learner is a life-skill: but this is to justify “independent learning” with reference to your learning objectives and not with reference to the effectiveness of the approach as a pedagogy. You might have learnt to look things up on Google but end up knowing bugger all Maths.

2. Learning is not primarily about “filling up your vessel”: it’s not about someone else filling up your vessel and it’s not about filling up your own vessel either. And so this interview corroborates the point that I have made several times on this blog, that the doctrine of “independent learning” emphasises the accumulation of information rather than the development of capability.

It is a point that Diana Laurillard makes more effectively than I could in her recent book, “Teaching as a Design Science”. I quote her for example in “Why teachers don’t know best” at https://edtechnow.net/2013/08/27/blind/.

“Technology opportunists who challenge formal education argue that, with wide access to information and ideas on the web, the learner can pick and choose their education—thereby demonstrating their faith in the transmission model of teaching. An academic education is not equivalent to a trip to the public library, digital or otherwise”.

I suspect that the children at this primary school are probably very happy, running around finding things out, and everything is probably very harmonious – and they might develop some capabilities along the way. But I doubt that they are making so much progress with their abstract Maths.

As a supplementary point, I think that independent project work is likely to motivate 10 and 11 year olds (who are delighted with the trust placed in them and keen to please their teachers) rather more effectively than 13 and 14 year olds. Which is why the TestBed trials did show a small positive effect for the sort of independent project work that you describe at the top of KS2, but none at KS3 or KS4.

In summary, my position is as follows:

1. The approach to “independent learning” and the emphasis on motivation rather than process does not generally improve learning outcomes.

2. The quantitative evidence supports this position.

3. The anecdotal and survey evidence that reflects teacher perceptions is unreliable.

4. The argument that we know it works despite the lack of quantitative evidence is based on a seriously flawed understanding of statistics, research and causality.

5. Given (a) the fact that your views represent the current ed-tech consensus, and (b) that I believe that they are fundamentally mistaken, you would not then expect me to agree that “the whole process of innovation with technology in education is now being led by schools”. I do not accept that there is any genuine progress being made, or in consequence that there is any genuine innovation.

I *do* accept, by the way, that the sort of education-specific cloud service offered by Mathletics offers a promising example of the route forwards and I accept that many commercial services will spring *out of* schools – see my discussion above with Alex Jones.

That is why, in the early 2000s, when we were discussing these matters on the EEF, I agreed wholeheartedly with Becta’s new chairman, Andrew Pindar, that we needed a more dynamic ed-tech industry; but fundamentally disagreed with him that the way to do this was to sweep away the cottage industry that provides one of the key routes for innovation to emerge out of schools.

So I hope that might be at least one point of agreement between us. And thanks again for the comment, which I really value, even though I might disagree with significant parts of it. And do come back on me to carry on the debate if you like. I think it is something that we need much more of.

Crispin.

Crispin, one of the most well informed and insightful commentaries on this issue I have read in a very long time. As someone who’s been voicing his frustrations at the complete and utter disconnect between investment and educational benefit, for about a decade, I would add a couple of thoughts to this important debate.

What business operating in a service industry would survive for ten minutes, if it responded and reacted to every technology novelty and fad urged on it by customers, without a thorough business planning and budgeting exercise that focused on real customer benefits? Yet schools have been trying to do this for 15 years, and under pressure from the industry and the zealots, continue to do so.

On data. My only caution about this is that technologists tend to have a kind of absolute faith in the power of data which experienced teachers don’t. It’s no accident that RAISEonline evolved from the Fischer Family Trust data set. Real teachers know that the assessment data they use is untrustworthy, subjective and variable between institutions, regions and countries. See this piece of research for teachers’ views on data.

http://www.southampton.ac.uk/education/research/projects/data_dictatorship_and_data_democracy.page#publications

Dear Joe,

Thanks very much for your kind comments.

I entirely agree with you on data. When we get down to having the right conversation, there is much to be said about this.

Thanks for the link. I thought the dichotomy between private and shared use of data was very interesting. My “Stimulating Innovation” paper makes reference to the benefits that would accrue from better shared use of data (in terms of team teaching) and I think that this will only happen if we allow innovative new data formats to bubble up from teachers and innovative companies, rather than being imposed top-down by officialdom.

Interested (and aware) too of the point about accountability muddying the water. I think this is something that teachers are just going to have to get over, because as I suggest in my answer to Alex Jones, effective outcome-driven accountability is an essential concomitant of more autonomy. Teachers must either be told how to do every aspect of their job, or they must expect to be held accountable based on outcomes. But I think it might help if the data was seen *primarily* as relevant to a shared teaching process and only secondarily as a matter of accountability.

Part of that sensitivity is I think due to the assumption that good performance on the part of the teacher is down to personality, respect and relationships. While the provision of good ed-tech will make the whole performance issue less personal, less individual and in many senses less stressful for teachers.

I think that much of the data that we have at the moment is based on “authority” – it tells us we should pay attention to it because it is exam board data or Ofsted data etc. The reliability of summative exam data is, I suspect, something of a can of worms that no-one dares to open but on which teachers are regularly judged. You can quite understand why teachers regard this as a sort of tyranny which ignores what they feel are important aspects of the student’s progress, like motivation and attitude.

Data will become more useful and more trusted, I think, when we have the analytics system that can show us the level of confidence that the data commands on the basis, not of the authority of the issuer, but:

1. on its predictive reliability;

2. on the existence of clear, shared understandings about what (sort of performance) is being predicted…

And on the basis of those sorts of analysis, we will derive judgements not of the *authority* of the data issuer but of the *reliability* of the data issuer. And that will completely revolutionise our entire assessment system. It will, for example, allow officialdom to place much more reliance on the judgement of teachers (e.g. with respect to motivation and attitude) where that judgement is shown to carry weight in terms of its predictive reliability.
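To make “predictive reliability” a little more concrete, here is one very rough way it might be scored. This is only a sketch resting on my own assumptions: it uses a plain Pearson correlation between what a data issuer predicted and what later happened, the function name `predictive_reliability` is invented for illustration, and the numbers are made up rather than drawn from any real school. A serious analytics system would need far more care about what is being predicted and over what timescale.

```python
from math import sqrt


def predictive_reliability(predicted, actual):
    """Pearson correlation between earlier predictions and later outcomes.

    Values near 1 mean the issuer's data tracked what later happened;
    values near 0 mean it told us little. (Illustrative measure only.)
    """
    if len(predicted) != len(actual) or len(predicted) < 2:
        raise ValueError("need two equal-length series with at least two points")
    n = len(predicted)
    mean_p = sum(predicted) / n
    mean_a = sum(actual) / n
    cov = sum((p - mean_p) * (a - mean_a) for p, a in zip(predicted, actual))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_a = sum((a - mean_a) ** 2 for a in actual)
    if var_p == 0 or var_a == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / sqrt(var_p * var_a)


# Made-up illustrative numbers, not real assessment data.
teacher_judgement = [4, 5, 6, 7, 8]   # hypothetical teacher-predicted grades
official_forecast = [6, 6, 5, 7, 6]   # hypothetical forecast from an "authority"
later_outcome = [4, 6, 6, 7, 9]       # hypothetical grades actually achieved

if __name__ == "__main__":
    print("teacher:", round(predictive_reliability(teacher_judgement, later_outcome), 2))
    print("official:", round(predictive_reliability(official_forecast, later_outcome), 2))
```

In this invented example the teacher’s judgement tracks the later outcome more closely than the official forecast does, which is the sort of finding that would justify placing more weight on it, whoever the issuer happens to be.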

I think there is another misconception that sees data as something that is passed around in spreadsheets and needs a statistics degree and a spare afternoon to understand. When in fact the whole internet is data, which is “surfaced” through different human-viewable interfaces.

I really look forward to having this conversation in full. It is of course one of the conversations that ETAG should be having but isn’t.

Crispin.

Crispin,

Replying to your reply to me first rather than Joe’s post, what I may not have expressed clearly about the causal relationships between use of technology and better learning/higher achievement, is that it is impossible to RESEARCH and PROVE the causal relationships except in very limited circumstances when learners are learning alone with technology with no teacher or peer input. Of course there are causal relationships and it is possible to measure changes in learning activity that result from use of technology. But the best that it is possible to do in linking use of technology to rises in learning achievements is to get correlations.

In the Compelling Case Studies work that I did with St Peters Bratton Primary and Broadgreen Primary School the Heads of both schools had the data to show the very considerable rises in achievement, way beyond previous expectations, that had occurred during the period over which the teachers and pupils had been using technology. The PDF case studies that I produced attempted to quantify changes in learning activity that might have been the activities instrumental in creating those rises in achievement. It was possible to see many-fold increases in the activities which the teachers professionally judge to have greater impact on achievement, for example the 7-fold increase in revisions of their creative writing that happened with the pupils at Broadgreen Primary. Anna McDiarmid in the video (on my website) clearly explains the role of technology in enabling and no doubt causing this increase.

Turning to Joe’s post, he is absolutely wrong in saying that there is a complete and utter disconnect between investment in EdTech and educational benefit. There may be in some schools who just go out and buy iPads or other tech with no clear idea how to use it, but there most certainly is not a disconnect in the many schools that are investing on the basis of measured changes in learning activity that they have every right to decide are the changes that are creating the raised achievement they are also measuring.

To actually know whether increased time on task, or better ability to discuss work with peers, or ability to respond to teacher feedback during the course of a piece of work (instead of just at the end when it is marked) are the things causing a rise in achievement, it would be necessary to get inside pupils’ brains, which is of course impossible. Learning happens in a non-linear way and it can be a single short conversation that helps a pupil to click a bit of learning into place, after they have struggled to learn something despite good effort over a period of time.

The data that I would be interested to see is “learning activity profiles” that describe the kinds of activities and interactions that good learners/achievers have, compared to the profiles of poor learners/achievers. Then we would start to see the mix of activity and interactions that appear to work best. Those profiles would also say a great deal about the pupils’ levels of engagement and motivation that they brought to bear and which stimulated the activities and interactions.

Regards

Roger

Hi Roger,

Thanks for the clarification and further thoughts.

On correlation vs causation, if you can show correlation then you can show causation. I think this is an issue that is widely misunderstood, mainly because of the rather misleading aphorism “correlation does not imply causation”. I wrote about this recently at https://edtechnow.net/2014/02/06/private/#section_1_1, so perhaps you might like to read that short piece and come back to me if you don’t agree.

On the question of restricting the number of variables that might confuse the research, I do not agree that you necessarily have to pare away every possible confounding variable to study learning in a situation in which you have only the single variable you want to measure. An alternative approach is to study enough cases in enough varied circumstances that you average out the variables. If you were to have groups of 1,000 learners being taught by 50 teachers, then the distribution of different types of teacher within the groups would be pretty similar. You can never get everything to be exactly the same, of course, but you can get things to be similar enough to ensure that the results are valid within a margin of error. And then, when you have got the results and you find they are interesting enough to warrant it, you need to repeat the whole experiment to check it out.
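The averaging-out argument can be illustrated with a small simulation. This is a sketch under stated assumptions, not a model of any real trial: teacher "quality" is reduced to a single number drawn from a standard normal distribution, teachers are allocated to two groups at random, and the function name and trial counts are invented for the example. It compares how far apart the two groups' average teacher quality tends to be when each group has 5 teachers and when each has 50.

```python
import random
from statistics import mean


def average_gap(teachers_per_group, trials, rng):
    """Mean absolute difference in average teacher quality between two
    randomly drawn groups, averaged over many repeated draws."""
    gaps = []
    for _ in range(trials):
        group_a = [rng.gauss(0, 1) for _ in range(teachers_per_group)]
        group_b = [rng.gauss(0, 1) for _ in range(teachers_per_group)]
        gaps.append(abs(mean(group_a) - mean(group_b)))
    return mean(gaps)


rng = random.Random(2014)  # fixed seed so the run is repeatable
gap_small = average_gap(5, 500, rng)    # 5 teachers per group
gap_large = average_gap(50, 500, rng)   # 50 teachers per group

print(f"typical gap with 5 teachers per group:  {gap_small:.2f}")
print(f"typical gap with 50 teachers per group: {gap_large:.2f}")
```

With more teachers per group the typical gap shrinks, which is the sense in which a confounding variable like teacher quality becomes "similar enough" across groups. It never reaches zero, hence the margin of error and the need to repeat the experiment.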

I would agree that this is demanding. There was a brief Twitter conversation between Lord Lucas and Dylan Wiliam – I think in September at the time when Michael Gove announced his interest in RCTs – in which Dylan Wiliam said you could never get *enough* data to do this properly. But you *could* get enough data if you had data-driven ed-tech that was capturing this sort of learning outcome data, automatically, all across the country, as a routine part of the teaching process. Subject (as Bruce Nightingale has been pointing out in the ETAG Twitter stream) to getting the public to accept the use of that sort of data in that sort of way.

In short, while I accept that there are issues around isolating variables in the classroom, I do not accept that it is impossible, either in theory or in practice. This sort of research *has* been done at the scale that is required to make it work – e.g. the ILS trials in the late 90s, the ImpaCT2 trials and the Becta Test Beds – and the results are remarkably consistent: very marginal effects, probably a little more so at KS2, but no significant effects at KS3 or KS4. If you read the Education Endowment Foundation Report, that is their conclusion and basically I think it is everybody’s conclusion. Except that, for some, it is the starting point for the sort of fudging that we discussed in the last iteration of our discussion.

I entirely agree with you that the ability of a straightforward word processor to encourage review and redrafting is, at least in pedagogical theory, extremely interesting. But for all the reasons discussed above, I cannot accept that you can be so confident that this is raising standards of learning on the basis of teachers’ professional judgement, when this improvement in learning outcomes cannot be shown by quantitative research. As Tom Bennett has shown in his recent Teacher Proof, teachers’ professional judgement cannot always be relied on.

This is a technique that I have used myself, requiring sixth form students to hand their essays in word processed format and using Microsoft Word’s tracked changes feature to hand them back with comments and suggestions for redrafting – and then to take them in again. But for a teacher, getting through all your marking in good time is a pretty big challenge at the best of times and taking students through a guided redrafting exercise effectively doubles or triples your workload. You also find yourself chasing students for their redraft at the same time as you are chasing them for the first draft of their next essay. It’s just not sustainable. Even if you do the whole exercise in class time, you still have a real pressure on teacher feedback, which in my view is always a hugely scarce resource. That is why no-one has been able to get Assessment for Learning to work at scale. So I never found it sustainable and, in my personal professional judgement as a teacher, I doubt that in the long term it is for anyone else either. Which is why it doesn’t show up in the research data.

I agree that learning happens in a non-linear way (and it is my private hunch that once we have good data on it, we will find that, properly used, the summer holidays can be made educationally very valuable for this reason) – but that is something that (like teacher quality) can be averaged out by numbers and longitudinal surveys. I am not an expert statistician – but I think there are enough examples around to show that you can do a lot with good statistics and enough data.

I agree that what I call “capability” (the thing that increases as we learn) is something that cannot be observed directly because it is inside the black box. But this does not mean that it cannot be observed indirectly. “Virtue” said Aristotle, “is a disposition to do virtuous acts”; so is capability a disposition to produce certain sorts of performance. Observe the performances and you can infer the capability.

So I completely agree with you that mapping capabilities and performances and observing the correlations between them is what learning analytics promises to do – and that will be hugely interesting. But we will only get the data we need in the quantities we need when we have interoperable, data-driven instructional software to generate this data automatically (under teacher control).

One final point. I remember you saying some time ago, when you were working with Frog (2009 perhaps?), that it wasn’t about using the technology to improve learning directly. That, you said, was still too difficult. What it was about was increasing the efficiency of the institution by better information flows, use of email etc. I can see too that the ethos of the school is very important; and perhaps for the teacher in some senses to be “leader of the gang” – to have the peer culture on their side. Which is a reason why getting involved in the social networking life of the student is also important (in the same way that I saw a Channel 4 Dispatches programme last night on how junk food advertisers are infiltrating children’s social networks). If the baddies are there then the goodies should be there too. So all that stuff I agree may be useful tools in the hands of schools who want to give a strong lead, both in terms of ethos and organisation. But I also agree with what I understood you to say – none of that is really ed-tech: it could be useful, properly handled by dynamic school leadership (and that is a big if) but it’s peripheral to the core business of instruction.

I think we are now on the cusp at which real ed-tech is no longer too difficult – and I think handling that revolution which is now around the corner is what ETAG is and should be about – not doing all the peripheral stuff that we’ve been doing for 10 or 20 years, which at its best has been inspiring but overall hasn’t made that much difference. I think that would be to get stuck in a rut and miss the really big opportunity that is round the corner and is going to arrive quite suddenly and really quickly.

Thanks as ever for the comment,

Crispin.

The published remit is written in casual language:

“… and we have also been asked to identify any barriers to the growth of innovative learning technology that have been put in place (inadvertently or otherwise) by the Governments, as well as thinking about ways that these barriers can be broken down.” Source: http://www.heppell.net/etag/

Imagine that ETAG’s mission is to evaluate the merits of an open source, cloud based education data infrastructure designed to address a set of common problems in schools: namely, the lack of data interoperability between the various databases and software systems that schools use, coupled with the ongoing challenge of spending public money to maintain these legacy, proprietary IT systems.

That makes for a lot of bureaucratic inefficiencies with teachers and staff manually downloading from (say) SIMS, uploading, re-entering student information, SEN data, timetables, marks/levels etc into various applications like Excel. In short, it’s difficult to build a full profile on students and to track and support their progress. This is another barrier that needs breaking down particularly now that education is [supposed to be] data driven.

Across England and Wales ‘data driven’ is a code for ‘levels’, which the DfE have decided are no longer appropriate as an accurate global measure of pupil achievement, but we do have standardized testing via end of KS2 SATs and KS4 and KS5 public examinations. The data from these tests will inform the Attainment 8 measure that will be used (in part) to judge schools’ effectiveness.

We are witnessing a number of technology interventions that schools are adopting to support learning and teaching (hardware such as tablets, iPads, Chromebooks and BYOD, i.e. shifting the cost to parents/carers, together with the software and apps being accessed) alongside legacy VLEs. These devices tend not to “talk” to one another nor to the student information systems that store pupils’ education records. VLEs can’t share data or even courses between different vendors. I can export a course from Moodle but can’t import it into Blackboard, Frog etc. I can’t share quizzes between different systems. The absence of standards discourages developers from producing content for so many different systems. This is a barrier to learning.
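To make the quiz-sharing problem concrete, here is a minimal Python sketch of what a shared interchange format could look like. Everything in it is hypothetical: "vendor A" and "vendor B" are invented export layouts, not the real formats of Moodle, Blackboard, Frog or any other product, and `QuizQuestion` is simply an assumed neutral schema. The idea is that once two systems agree on one common structure, each needs a single converter rather than one for every other vendor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QuizQuestion:
    """Hypothetical neutral format shared by all systems."""
    prompt: str
    choices: tuple
    answer_index: int


def from_vendor_a(record):
    # Invented layout A: the correct answer is stored as a position.
    return QuizQuestion(
        record["question"], tuple(record["options"]), record["correct"]
    )


def from_vendor_b(record):
    # Invented layout B: each answer carries its own correctness flag.
    texts = tuple(answer["text"] for answer in record["answers"])
    correct = [i for i, a in enumerate(record["answers"]) if a["is_correct"]]
    if len(correct) != 1:
        raise ValueError("expected exactly one correct answer")
    return QuizQuestion(record["stem"], texts, correct[0])


def to_vendor_b(question):
    # Export from the neutral format back into invented layout B.
    return {
        "stem": question.prompt,
        "answers": [
            {"text": text, "is_correct": i == question.answer_index}
            for i, text in enumerate(question.choices)
        ],
    }


a_record = {"question": "2 + 2 = ?", "options": ["3", "4", "5"], "correct": 1}
shared = from_vendor_a(a_record)       # import from system A
b_record = to_vendor_b(shared)         # export to system B
round_trip = from_vendor_b(b_record)   # re-import loses nothing
print(round_trip == shared)
```

With a shared schema of this kind, a content developer would write a quiz once and each system would only need to convert to and from the common format.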

In between SATs and GCSEs how do we track pupils’ learning across the nation? Can we track learning? How can we track learning? Does the field of ‘learning analytics’ offer real possibilities of opening up fresh insight into ‘how’ people learn? Are there issues of privacy to take into account? Will we have an evidence base, as opposed to an anecdotal base, from which to assess and critique?

Pearson are an important publisher of educational materials in both new and old forms. The ETAG core membership lacks commercial expertise and insight into these newly emerging fields – check out Pearson’s research ‘Impacts of the Digital Ocean on Education’. Given the recent court ruling on Google being a ‘data controller’ and the implications for privacy, keeping private and confidential the data records of our young people must be a priority concern. Yesterday, 2nd June 2014, Harvard University published ‘Framing the Law and Policy Picture: A Snapshot of K-12 Cloud Based and Ed Tech & Student Privacy in 2014’: http://cyber.law.harvard.edu/publications/2014/law_and_policy_snapshot